What happens when a request arrives?

Pixies and/or gnomes are not an acceptable explanation

Need to understand how requests are queued, multiplexed and managed

There are lots more, but I’ve worked with these, so I won’t sound as clueless talking about them.

(Yes, I know some of those technically aren’t web servers, but humour me…)

Like a city train station with multiple platforms

Each request is a train

Can handle as many trains at once as you have platforms

Conductor controls which trains go onto which platforms

If all platforms are full, trains have to queue up and wait

Service slows down

Sometimes for the trains already on a platform

Sometimes for the trains waiting outside

BUT: a few slow trains on some platforms don’t slow the other platforms down
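The train station maps fairly directly onto a fixed-size worker pool with a waiting queue. The sketch below uses a Java thread pool as a stand-in for Apache’s worker processes (an assumption for illustration: Apache’s prefork model uses processes, not Java threads), and `TrainStation` and `slowTrainDoesNotBlockOthers` are hypothetical names. The slow train grabs a platform and refuses to leave until every fast train has gone through. With two or more platforms that happens; with a single platform the fast trains are stuck in the queue and the slow train eventually gives up waiting.

```java
import java.util.concurrent.Callable;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.TimeUnit;

// Hypothetical model: each pool thread is a "platform", each submitted task is a "train".
class TrainStation {

    // Returns true if every fast train got through on some other platform
    // while the slow train was still occupying its own platform.
    static boolean slowTrainDoesNotBlockOthers(int platforms, int fastTrains, long patienceMillis) {
        // Fixed number of platforms; extra trains wait in the executor's (unbounded) queue.
        ExecutorService station = Executors.newFixedThreadPool(platforms);
        CountDownLatch fastTrainsGone = new CountDownLatch(fastTrains);
        try {
            // The slow train arrives first and sits on its platform until all fast trains
            // have left, or until it runs out of patience.
            Callable<Boolean> slowTrain = () -> fastTrainsGone.await(patienceMillis, TimeUnit.MILLISECONDS);
            Future<Boolean> slow = station.submit(slowTrain);
            for (int i = 0; i < fastTrains; i++) {
                station.submit(fastTrainsGone::countDown); // a quick request: in and out
            }
            return slow.get();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        } catch (ExecutionException e) {
            throw new IllegalStateException(e);
        } finally {
            station.shutdownNow(); // close the station, dropping any trains still queued
        }
    }

    public static void main(String[] args) {
        System.out.println("2 platforms: " + slowTrainDoesNotBlockOthers(2, 5, 5_000)); // true
        System.out.println("1 platform:  " + slowTrainDoesNotBlockOthers(1, 5, 200));   // false
    }
}
```

The second call is the “all platforms full” case: the fast trains never get a platform, so the service slows down for everyone waiting outside.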

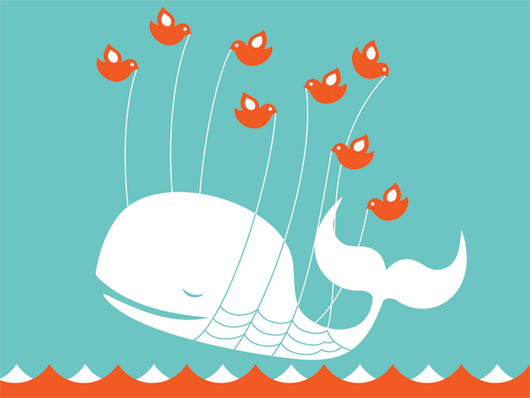

You may as well just give up and go home. You’re not getting in

Apache doesn’t do much on its own

But has lots of open source community activity

Many modules to plug in to do almost anything

Being on the beaten track has its advantages

Passenger is a module that lets you run Rails apps on Apache

Conceptually the same as Apache

Separate platforms, trains come in

Can still hang with too many long requests

But under the hood it’s all happening in the same process, on threads that share memory. Not separated at all

Ok most of the time

Can cause problems if you’re not careful to keep things separated in memory
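A minimal sketch of what “not separated at all” means in practice, assuming plain Java threads standing in for the server’s request threads (`SharedCounter` and `run` are hypothetical names). Every thread bumps the same static fields: the plain `int` can lose updates when two threads read the same value at once, while `AtomicInteger` cannot.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical demo: request threads in one process all see the same static fields.
class SharedCounter {
    private static int unsafeHits = 0;                                 // plain shared field
    private static final AtomicInteger safeHits = new AtomicInteger(); // thread-safe shared counter

    // Starts `threads` threads that each bump both counters `perThread` times.
    // Returns {unsafeHits, safeHits}; only the second is guaranteed to equal threads * perThread.
    static int[] run(int threads, int perThread) {
        unsafeHits = 0;
        safeHits.set(0);
        List<Thread> workers = new ArrayList<>();
        for (int t = 0; t < threads; t++) {
            Thread worker = new Thread(() -> {
                for (int i = 0; i < perThread; i++) {
                    unsafeHits++;               // read-modify-write: two threads can read the same value, one update is lost
                    safeHits.incrementAndGet(); // atomic read-modify-write: no lost updates
                }
            });
            workers.add(worker);
            worker.start();
        }
        try {
            for (Thread worker : workers) worker.join(); // join also makes the final values visible here
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return new int[] {unsafeHits, safeHits.get()};
    }

    public static void main(String[] args) {
        int[] hits = run(8, 100_000);
        System.out.println("expected 800000, unsafe=" + hits[0] + ", atomic=" + hits[1]);
    }
}
```

The unsafe count varies from run to run and machine to machine, which is exactly what makes this class of bug so hard to reproduce.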

Shared state concurrency is easy to screw up though

THIS WILL HURT YOU

private static final DateFormat dateFormatter = new SimpleDateFormat();

SimpleDateFormat isn’t thread-safe, and this one static instance is shared between all threads running the code
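Two common fixes, sketched assuming Java 8 or later: share an immutable `java.time.format.DateTimeFormatter`, which is documented as thread-safe, or, for legacy code that still needs `java.util.Date`, give each thread its own `SimpleDateFormat` via `ThreadLocal`. `SafeDates` and its methods are hypothetical names; `countCorrupted` just hammers both approaches from a thread pool and counts wrong answers.

```java
import java.text.DateFormat;
import java.text.ParseException;
import java.text.SimpleDateFormat;
import java.time.LocalDate;
import java.time.format.DateTimeFormatter;
import java.util.ArrayList;
import java.util.Date;
import java.util.List;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

// Hypothetical helper showing two thread-safe replacements for a shared static SimpleDateFormat.
class SafeDates {
    // Fix 1: java.time formatters are immutable and thread-safe, so one shared instance is fine.
    private static final DateTimeFormatter DAY = DateTimeFormatter.ofPattern("yyyy-MM-dd");

    // Fix 2 (legacy java.util.Date code): each thread lazily gets its own SimpleDateFormat.
    private static final ThreadLocal<DateFormat> LEGACY_DAY =
            ThreadLocal.withInitial(() -> new SimpleDateFormat("yyyy-MM-dd"));

    static String format(LocalDate day) {
        return DAY.format(day);
    }

    static String roundTripLegacy(String text) {
        DateFormat fmt = LEGACY_DAY.get(); // this thread's private instance
        try {
            Date parsed = fmt.parse(text);
            return fmt.format(parsed);
        } catch (ParseException e) {
            throw new IllegalArgumentException("Unparseable date: " + text, e);
        }
    }

    // Formats and round-trips `tasks` different dates from a shared pool; returns how many came back wrong.
    static int countCorrupted(int tasks) {
        ExecutorService pool = Executors.newFixedThreadPool(8);
        try {
            List<Future<Boolean>> results = new ArrayList<>();
            for (int i = 0; i < tasks; i++) {
                LocalDate day = LocalDate.of(2000, 1, 1).plusDays(i);
                results.add(pool.submit(() -> {
                    String text = format(day);
                    return text.equals(day.toString()) && roundTripLegacy(text).equals(text);
                }));
            }
            int wrong = 0;
            for (Future<Boolean> result : results) {
                if (!result.get()) wrong++;
            }
            return wrong;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        } catch (ExecutionException e) {
            throw new IllegalStateException(e);
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        System.out.println("corrupted results: " + countCorrupted(10_000)); // expect 0
    }
}
```

In a servlet container a `ThreadLocal` lives as long as the pooled thread that owns it, so `DateTimeFormatter` is the simpler choice where you can use it.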

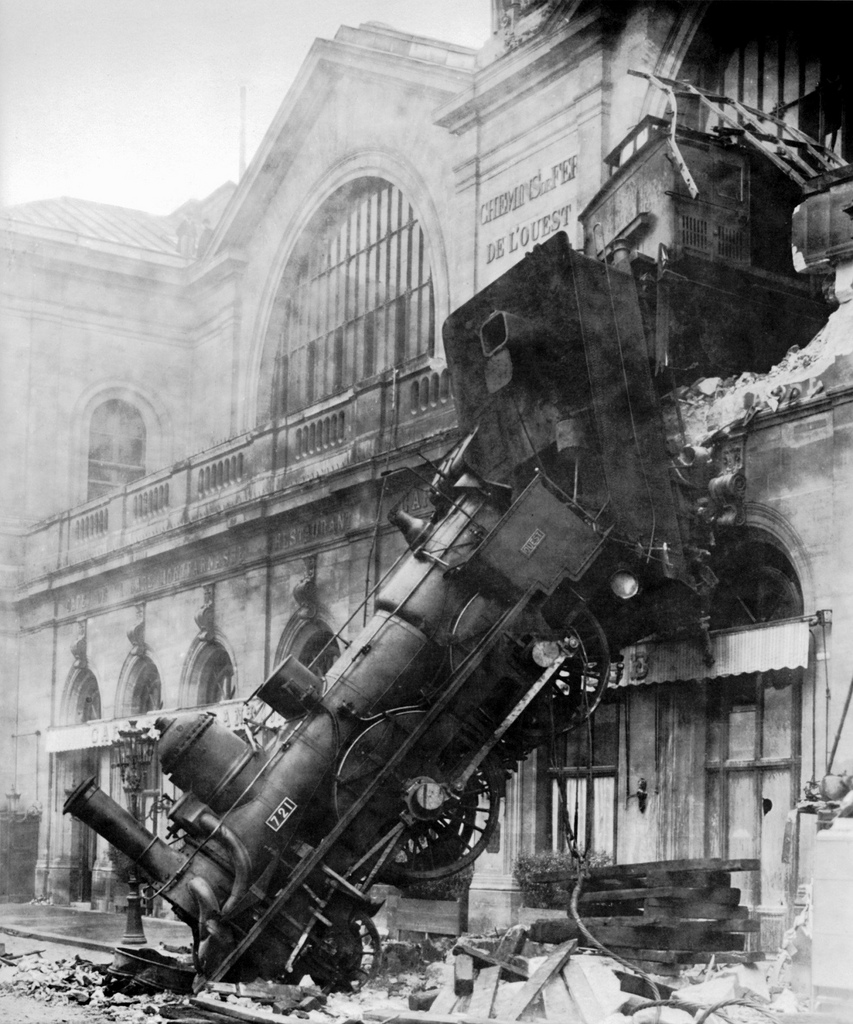

Just a matter of time till…

Well, maybe not as dramatically. You wish

It will probably just show up as a cryptic, hard-to-debug NullPointerException, or as corrupted data you don’t notice until months later…

Another concurrency model: Supermarket with lots of checkouts

Simple conceptually

Each checkout has own queue

Works well (mostly…)

Some guy paying for groceries with small change slowly

Everyone else at that checkout has to wait

If you choose the wrong queue, you’re stuffed

Suffers from “guy paying with small change”

Means more monitoring and restarting when slow requests block it up

Restarting a Mongrel kills all the requests queued for it, so users see errors
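Per-checkout queues can be sketched with one single-threaded executor per checkout and round-robin assignment. This is an illustrative model of one-request-at-a-time workers, not how Mongrel or the proxy in front of it is actually implemented; `Checkouts` and `servedWhileSlowCustomerPays` are hypothetical names. Customer 0 is the guy paying in small change: everyone who lands in his queue waits, even while the other checkouts are free.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

// Hypothetical model of one-request-at-a-time workers, each with its own queue.
class Checkouts {

    // Returns (sorted) the customers who got served while customer 0 was still paying in small change.
    static List<Integer> servedWhileSlowCustomerPays(int checkouts, int customers) {
        List<ExecutorService> lanes = new ArrayList<>();
        for (int i = 0; i < checkouts; i++) {
            lanes.add(Executors.newSingleThreadExecutor()); // one cashier, one queue
        }
        CountDownLatch stillPaying = new CountDownLatch(1);
        List<Integer> served = Collections.synchronizedList(new ArrayList<>());
        List<Future<?>> receipts = new ArrayList<>();
        try {
            for (int c = 0; c < customers; c++) {
                int customer = c;
                // Round-robin assignment: once you're in a queue, you can't switch.
                ExecutorService lane = lanes.get(c % checkouts);
                receipts.add(lane.submit(() -> {
                    if (customer == 0) stillPaying.await(); // the guy counting out small change
                    served.add(customer);
                    return null;
                }));
            }
            // Wait for everyone who is NOT stuck behind customer 0.
            for (int c = 0; c < customers; c++) {
                if (c % checkouts != 0) receipts.get(c).get();
            }
            List<Integer> snapshot;
            synchronized (served) {
                snapshot = new ArrayList<>(served);
            }
            stillPaying.countDown(); // he finally finishes; his queue starts moving again
            for (Future<?> receipt : receipts) receipt.get();
            Collections.sort(snapshot);
            return snapshot;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        } catch (ExecutionException e) {
            throw new IllegalStateException(e);
        } finally {
            lanes.forEach(ExecutorService::shutdownNow);
        }
    }

    public static void main(String[] args) {
        // Customers 2 and 4 share customer 0's checkout, so they wait even though checkout 1 is free.
        System.out.println(servedWhileSlowCustomerPays(2, 6)); // [1, 3, 5]
    }
}
```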

Forget about trains and pedestrians for a minute

Think about a waiter at a restaurant

Nginx hides its single-threaded, event-driven model in a black box

Don’t have to care about it day to day. Just use it and it works

Node puts it in your face

In theory, a single waiter could serve millions of customers

It would be slow, but it wouldn’t need any extra waiters (threads or processes)

You can’t do this with the Apache/Tomcat-style model of one process or thread per request. There’s a limit to how many “train platforms” you can build
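The waiter can be sketched in Java with NIO’s `Selector`, the same kind of readiness-based multiplexing that event-driven servers build on (the JDK typically uses epoll or kqueue underneath). This is a toy echo server, not Nginx or Node: one thread accepts every connection and echoes whatever arrives on whichever sockets are ready. `EventLoopEcho`, `serve` and `demo` are hypothetical names, and it assumes replies are tiny enough to write straight away, so there is no per-connection write queue.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.net.InetSocketAddress;
import java.net.Socket;
import java.nio.ByteBuffer;
import java.nio.channels.SelectionKey;
import java.nio.channels.Selector;
import java.nio.channels.ServerSocketChannel;
import java.nio.channels.SocketChannel;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;

class EventLoopEcho {

    // The "waiter": a single thread and a single Selector serve every connection.
    // Returns after `clients` connections have been accepted and then closed by the client.
    static void serve(ServerSocketChannel server, int clients) throws IOException {
        try (Selector selector = Selector.open()) {
            server.configureBlocking(false);
            server.register(selector, SelectionKey.OP_ACCEPT);
            ByteBuffer buf = ByteBuffer.allocate(1024);
            int finished = 0;
            while (finished < clients) {
                selector.select(); // sleep until at least one socket needs attention
                Iterator<SelectionKey> ready = selector.selectedKeys().iterator();
                while (ready.hasNext()) {
                    SelectionKey key = ready.next();
                    ready.remove();
                    if (key.isAcceptable()) {
                        SocketChannel customer = server.accept(); // a new table to look after
                        if (customer == null) continue;
                        customer.configureBlocking(false);
                        customer.register(selector, SelectionKey.OP_READ);
                    } else if (key.isReadable()) {
                        SocketChannel customer = (SocketChannel) key.channel();
                        buf.clear();
                        if (customer.read(buf) < 0) { // customer left
                            customer.close();          // also cancels the key
                            finished++;
                            continue;
                        }
                        buf.flip();
                        while (buf.hasRemaining()) customer.write(buf); // tiny replies: assumed to go out at once
                    }
                }
            }
        }
    }

    // Opens all client connections up front, so the one server thread really is juggling them at once.
    static List<String> demo(int clients) {
        try (ServerSocketChannel server = ServerSocketChannel.open()) {
            server.bind(new InetSocketAddress("127.0.0.1", 0)); // any free port
            int port = ((InetSocketAddress) server.getLocalAddress()).getPort();
            Thread waiter = new Thread(() -> {
                try {
                    serve(server, clients);
                } catch (IOException e) {
                    throw new UncheckedIOException(e);
                }
            });
            waiter.start();

            List<Socket> customers = new ArrayList<>();
            for (int i = 0; i < clients; i++) customers.add(new Socket("127.0.0.1", port));
            for (int i = 0; i < clients; i++) {
                customers.get(i).getOutputStream().write(("order-" + i).getBytes(StandardCharsets.UTF_8));
            }
            List<String> replies = new ArrayList<>();
            for (int i = 0; i < clients; i++) {
                int length = ("order-" + i).getBytes(StandardCharsets.UTF_8).length;
                byte[] reply = customers.get(i).getInputStream().readNBytes(length);
                replies.add(new String(reply, StandardCharsets.UTF_8));
                customers.get(i).close(); // hanging up lets the loop count this customer as done
            }
            waiter.join();
            return replies;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println(demo(5)); // [order-0, order-1, order-2, order-3, order-4]
    }
}
```

The server side never blocks on any one customer: `select()` is the only place the thread waits, and it wakes up for whichever connection is ready next.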

But what if you COULD build millions of train platforms?

And do it cheaply and quickly?

And pack them into your available space really efficiently?

Erlang’s lightweight processes let you build millions of “train platforms”

Actually, you build a new platform for a single train

Then tear it down

All really cheaply
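Erlang processes have no direct Java equivalent, but virtual threads (JDK 21+) are the closest analogue and are swapped in here purely for illustration: a new, cheap thread per request, created when it arrives and thrown away when it finishes. `PlatformPerTrain` and `serve` are hypothetical names, and the sleep stands in for a request waiting on I/O.

```java
import java.time.Duration;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical sketch (JDK 21+): one brand-new virtual thread per request, discarded when it finishes.
class PlatformPerTrain {

    // Runs `trains` requests that each "wait on I/O" for `work`; returns how many completed.
    static int serve(int trains, Duration work) {
        AtomicInteger served = new AtomicInteger();
        try (ExecutorService station = Executors.newVirtualThreadPerTaskExecutor()) {
            for (int i = 0; i < trains; i++) {
                station.submit(() -> {
                    try {
                        Thread.sleep(work); // parks the virtual thread; its carrier OS thread is freed for others
                    } catch (InterruptedException e) {
                        Thread.currentThread().interrupt();
                        return;
                    }
                    served.incrementAndGet();
                });
            }
        } // close() waits for every submitted task to finish
        return served.get();
    }

    public static void main(String[] args) {
        System.out.println(serve(100_000, Duration.ofMillis(100))); // 100000
    }
}
```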

Another Erlang web server, similar to Mochiweb

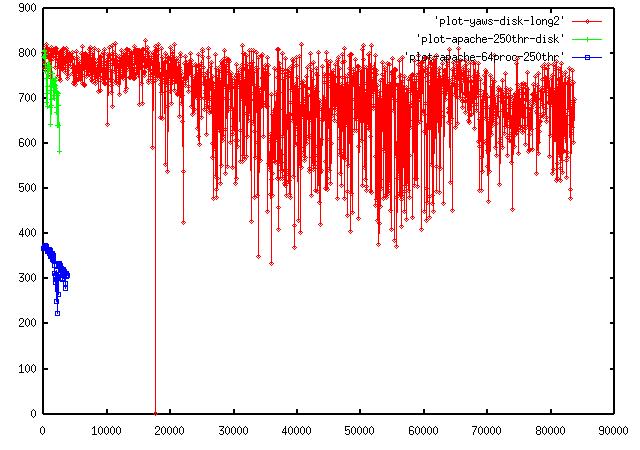

Apache dies at ~4,000 concurrent requests. Yaws is still ticking along at ~80,000.

Total throughput does not decrease as load increases.

Work has been done to get 1,000,000 concurrent requests on a single box with Mochiweb

http://www.flickr.com/photos/merwing/2084718669/

http://www.flickr.com/photos/hellodaniel/4161555475/

http://www.flickr.com/photos/richardluyy/4193816036/

http://www.flickr.com/photos/aerokev/4583650515/

http://www.flickr.com/photos/shinythings/2621363468/

http://www.flickr.com/photos/paulrobertlloyd/3806097796/

http://www.flickr.com/photos/learnscope/4397300890/

http://www.flickr.com/photos/obd-design/2374030181/

http://www.flickr.com/photos/90124154@N00/3157590023/

http://www.flickr.com/photos/benhosking/5076488919/

http://www.flickr.com/photos/biggolf/2231574833/

http://www.sics.se/~joe/apachevsyaws.html